Introduction: The Criticality of Measurement Integrity

Uncalibrated instruments pose severe risks in high-precision manufacturing. They can lead to product failure, expensive recalls, and audit non-compliance. Torque tester calibration serves as a critical defense against measurement drift. This drift often compromises safety-critical joints in aerospace and automotive assemblies. Furthermore, implementing an ISO 17025 torque calibration protocol ensures metrological traceability. It provides the documented evidence required to satisfy stringent industrial quality standards and regulatory bodies.

1. Torque Tester Overview and Industrial Significance

What primary function does a torque tester serve in a controlled manufacturing environment? This high-precision metrological instrument measures and verifies the output of torque-producing tools like wrenches and drivers. Internal transducers convert mechanical strain into electrical signals. The resulting digital or analog readout confirms if a tool meets its specified tolerance. Consequently, it prevents mechanical assembly failures in critical sectors. It remains the foundation of measurement reliability.

Torque testers, such as the Mountz LTT series or CDI Multitest systems, serve as the “master” reference point on the factory floor. They operate on the principle of Hooke’s Law, where the elastic deformation of a sensing element is proportional to the applied torque. In the context of ISO 17025 torque calibration, these instruments must be significantly more accurate than the tools they test, typically by a test accuracy ratio of 4:1 (the tester's permissible error is at most one quarter of the tool's tolerance).

Without consistent torque tester calibration, the reliability of the entire assembly process is called into question. If the tester provides an inaccurate baseline, every subsequent measurement is invalidated. This is particularly dangerous in “safety-critical” joints where improper fastening can lead to mechanical fatigue or vibration-induced loosening in aircraft components or automotive drivetrains.

2. The Technical Standard for Torque Tester Calibration

How is a standardized calibration performed to meet global industrial requirements? Standardized torque tester calibration is carried out within the laboratory-competence framework of ISO/IEC 17025 and is commonly paired with ISO 6789, the standard for hand torque tools, using multi-point verification across the device’s full scale. The process requires applying known, traceable torque loads using precision weights and lever arms to verify the accuracy, repeatability, and linearity of the instrument’s transducer.

The ISO 17025 Calibration Framework

Professional calibration follows a structured, multi-point verification hierarchy. Technicians compare the device against a known standard under strictly controlled conditions. This process ensures results are globally traceable and legally defensible for aerospace audits.

- Thermal conditioning

- Load cycling (3x)

- Multi-point testing

- Uncertainty report

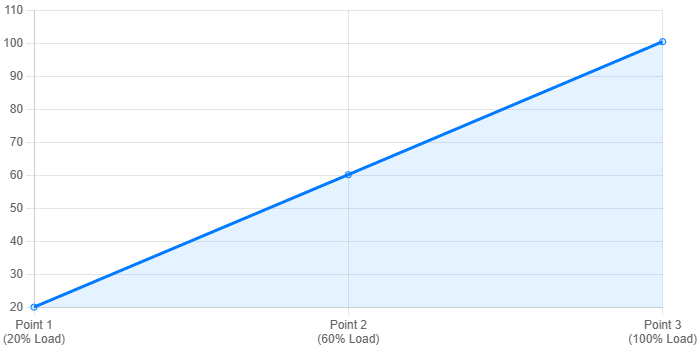

Multi-point verification trend showing typical deviation at 20%, 60%, and 100% capacity.

Step-by-Step Calibration Procedure

- Environmental Stabilization: The instrument must be placed in a temperature-controlled environment for at least 4 hours to minimize thermal expansion errors in the transducer material.

- Pre-loading: Apply the maximum rated capacity of the tester three times to “exercise” the internal strain gauges and stabilize the mechanical components.

- Zero-Point Calibration: Ensure the digital display or analog needle returns to an absolute zero state under no-load conditions to prevent offset errors.

- Verification Points: In accordance with ISO 17025 torque calibration standards, measurements are taken at 20%, 60%, and 100% of the maximum capacity. Five ascending and five descending readings are typically recorded.

- Calculations: The technician calculates the deviation, relative measurement uncertainty, and repeatability to determine if the device remains within the manufacturer’s specified tolerance.
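The calculation step above can be sketched as follows. This is a minimal illustration, not a standard-mandated formula: the function names, the readings, and the ±0.5 % tolerance are hypothetical, and repeatability is taken here simply as the spread of readings relative to the nominal value.

```python
from statistics import mean


def evaluate_point(nominal, readings):
    """Summarize one verification point: mean, deviation and spread in % of nominal."""
    avg = mean(readings)
    deviation_pct = (avg - nominal) / nominal * 100.0
    # Assumption: repeatability expressed as (max - min) relative to nominal.
    repeatability_pct = (max(readings) - min(readings)) / nominal * 100.0
    return {
        "mean": avg,
        "deviation_pct": deviation_pct,
        "repeatability_pct": repeatability_pct,
    }


def within_tolerance(result, tolerance_pct):
    """True if the absolute deviation does not exceed the stated tolerance."""
    return abs(result["deviation_pct"]) <= tolerance_pct


# Hypothetical readings (N·m) for a 100 N·m tester at 20 %, 60 % and 100 % of capacity.
points = {
    20.0: [20.1, 20.0, 19.9, 20.1, 20.0],
    60.0: [60.1, 60.2, 60.1, 60.0, 60.1],
    100.0: [100.3, 100.2, 100.3, 100.4, 100.3],
}

for nominal, readings in points.items():
    result = evaluate_point(nominal, readings)
    print(nominal, result, within_tolerance(result, tolerance_pct=0.5))
```

Ascending and descending series would normally be evaluated separately so that hysteresis shows up as a difference between the two.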

Traceability and Measurement Uncertainty

Traceability is the unbroken chain of comparisons back to a national standard (such as those maintained by NIST or PTB). In torque metrology, the uncertainty budget must account for variables including ambient temperature, friction in the loading system, and the resolution of the unit under test (UUT). The expanded uncertainty ($U$) is obtained by multiplying the combined standard uncertainty by the coverage factor $k=2$, which corresponds to a confidence level of approximately 95% in the measurement results.
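As a rough illustration of how an expanded uncertainty is assembled, the sketch below combines individual standard uncertainties by root-sum-square and applies $k=2$. It assumes the contributions are uncorrelated and already expressed as standard uncertainties in the same unit; the function names and the numeric values are hypothetical.

```python
import math


def resolution_uncertainty(resolution):
    """Standard uncertainty of a display resolution, assuming a rectangular
    distribution over +/- resolution/2."""
    return resolution / (2.0 * math.sqrt(3.0))


def expanded_uncertainty(standard_uncertainties, k=2.0):
    """Root-sum-square of uncorrelated standard uncertainties, times coverage factor k."""
    combined = math.sqrt(sum(u ** 2 for u in standard_uncertainties))
    return k * combined


# Hypothetical budget in N·m: reference standard, temperature, loading friction, UUT resolution.
budget = [0.020, 0.010, 0.015, resolution_uncertainty(0.01)]
print(f"U (k=2) = {expanded_uncertainty(budget):.4f} N·m")
```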

Definition: Metrological Traceability. The property of a measurement result whereby the result can be related to a reference through a documented unbroken chain of calibrations, each contributing to the measurement uncertainty.

Definition: Measurement Uncertainty. A non-negative parameter characterizing the dispersion of the quantity values being attributed to a measurand, based on the information used.

3. Establishing Torque Calibration Intervals

How often should an industrial torque tester undergo professional calibration? Optimal torque calibration intervals are determined by a combination of manufacturer recommendations, frequency of use, and the risk level of the application. While many manufacturers suggest a 12-month cycle, high-volume automotive or aerospace facilities often implement 6-month or even 3-month intervals to minimize the “risk window” between calibrations.

Establishing these intervals requires analyzing historical drift data. If a tester consistently shows minimal deviation during its annual check, the interval might be maintained. However, if the device requires significant adjustment, the interval must be shortened. Factors such as “out-of-tolerance” (OOT) conditions during an audit can force a retroactive review of all products touched by that tester since its last successful calibration.
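One way to turn this drift review into a repeatable rule is sketched below. The thresholds (80% of tolerance consumed, halving on an out-of-tolerance result, a 3-month floor) and the function name are assumptions chosen for illustration, not values taken from any standard.

```python
def next_interval_months(current_months, worst_deviation_pct, tolerance_pct, min_months=3):
    """Suggest the next calibration interval from the worst as-found deviation."""
    if tolerance_pct <= 0:
        raise ValueError("tolerance must be positive")
    used = abs(worst_deviation_pct) / tolerance_pct  # share of tolerance consumed by drift
    if used > 1.0:
        # Out of tolerance: halve the interval (hypothetical policy).
        return max(min_months, current_months // 2)
    if used > 0.8:
        # Close to the limit: shorten by one quarter-year (hypothetical policy).
        return max(min_months, current_months - 3)
    # Minimal deviation: keep the current interval.
    return current_months


print(next_interval_months(12, worst_deviation_pct=0.2, tolerance_pct=1.0))
print(next_interval_months(12, worst_deviation_pct=1.2, tolerance_pct=1.0))
```

Any such rule should be documented in the quality system so that interval changes can be justified during an audit.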

Critical Factors for Interval Determination:

- Usage Frequency: Devices used for thousands of cycles per week experience faster mechanical fatigue.

- Environmental Stress: Exposure to dust, vibrations, or extreme temperature fluctuations necessitates more frequent checks.

- Regulatory Requirements: Specific contracts in defense or aerospace may mandate specific, non-negotiable intervals.

4. Torque Tester Troubleshooting and Maintenance

What are the most common signs that a torque tester requires immediate technical intervention? Routine torque tester troubleshooting typically uncovers issues such as non-linear readings, failure to return to zero, or erratic digital displays caused by electromagnetic interference. If the device displays an ‘Overload’ error or shows inconsistent low-range results, the transducer has likely suffered permanent damage or the internal electronics have drifted.

Professional Maintenance Protocols

To maintain the integrity of torque tester calibration, follow these expert guidelines:

- Transducer Care: Never exceed 110% of the rated capacity. Overloading can plastically deform the sensing element and its strain gauges, requiring expensive factory repair.

- Connector Integrity: Inspect cables and pins for oxidation or bending. Weak signals between the transducer and the display unit are a common cause of measurement “noise.”

- Firmware Updates: Ensure the digital indicator is running the latest manufacturer software to provide accurate conversion algorithms for various units (N·m, lbf·ft, kgf·cm).
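Unit conversion is one place where indicator readings can be cross-checked by hand. The sketch below converts between the three units mentioned above using factors derived from the exact definitions of the pound-force, the foot, and the kilogram-force; the dictionary keys and function name are illustrative choices.

```python
# Newton-metres per one unit of each torque unit.
NM_PER_UNIT = {
    "Nm": 1.0,
    "lbf_ft": 1.3558179483314004,  # 4.4482216152605 N x 0.3048 m
    "kgf_cm": 0.0980665,           # 9.80665 N x 0.01 m
}


def convert_torque(value, from_unit, to_unit):
    """Convert a torque value between the units listed in NM_PER_UNIT."""
    try:
        return value * NM_PER_UNIT[from_unit] / NM_PER_UNIT[to_unit]
    except KeyError as exc:
        raise ValueError(f"unsupported unit: {exc.args[0]}") from None


print(convert_torque(50.0, "lbf_ft", "Nm"))
print(convert_torque(10.0, "Nm", "kgf_cm"))
```

Comparing a displayed value against an independent conversion like this is a quick way to spot a wrong unit setting before suspecting the transducer.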

5. FAQ – Expert Technical Answers

1. Why is ISO 17025 torque calibration required for aerospace and automotive audits? ISO 17025 is an internationally recognized standard for laboratory competence. It ensures that torque tester calibration results are accurate and traceable. This accreditation is often a mandatory contract requirement. It proves that measurements are legally defensible.

2. How do usage patterns influence torque calibration intervals? Higher cycle counts increase mechanical fatigue on sensing elements. If a tester performs thousands of cycles weekly, shorten the torque calibration intervals. This prevents “out-of-tolerance” (OOT) conditions. These conditions could invalidate months of production data.

3. What is the most effective approach to torque tester troubleshooting for digital drift? Start by checking the power source and cable shielding. This rules out electromagnetic interference. If the drift persists, the transducer may have mechanical fatigue. This requires full recalibration and adjustment of zero-offset parameters.

4. Can temperature fluctuations affect my torque calibration results? Yes, thermal expansion changes the physical properties of the transducer. Standard ISO 17025 torque calibration requires strict temperature controls. These controls ensure results reflect true mechanical performance.

5. What is the difference between accuracy and measurement uncertainty? Accuracy is the closeness of a measurement to the true value. In contrast, measurement uncertainty quantifies the range within which the true value is expected to lie at a stated confidence level. In professional torque tester calibration, reporting uncertainty is essential because it determines the risk of false acceptance, that is, passing a device whose true error exceeds its tolerance.
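To make the link between uncertainty and false acceptance concrete, the sketch below applies one common guard-banded decision rule: a point passes only if the deviation plus the expanded uncertainty still fits inside the tolerance. The function name, the three-way outcome labels, and the example numbers are assumptions; a laboratory's actual decision rule is whatever it has agreed with the customer.

```python
def conformity(deviation_pct, expanded_uncertainty_pct, tolerance_pct):
    """Classify a result using a simple guard band equal to the expanded uncertainty."""
    dev = abs(deviation_pct)
    if dev + expanded_uncertainty_pct <= tolerance_pct:
        return "pass"            # in tolerance even at the edge of the uncertainty range
    if dev - expanded_uncertainty_pct > tolerance_pct:
        return "fail"            # out of tolerance even at the edge of the uncertainty range
    return "indeterminate"       # uncertainty range straddles the tolerance limit


# Hypothetical values in percent of reading.
print(conformity(0.5, 0.2, 1.0))
print(conformity(0.9, 0.2, 1.0))
print(conformity(1.5, 0.2, 1.0))
```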

6. Conclusion – Protecting Your Industrial Reputation

Consistent torque tester calibration safeguards your facility. It prevents the financial fallout of product failure. Adhering to ISO 17025 torque calibration standards is essential. It keeps your quality control beyond reproach. Monitor your equipment for drift regularly.

Protect Your Audit Status

Don’t wait for a failure. Adhere to strict torque tester calibration schedules to ensure quality and compliance.